The first picture of a black hole is arguably one of the most exciting scientific developments in recent years. The blurry ring of fiery orange might not look difficult to produce, but it took years of effort by an international team of scientists, including computer scientists.

Reading the account, I was excited by the role Python played in this endeavour. This is notable because when we talk about a scientific discovery, we usually talk about the people: the scientists who made leaps of intuition and found correlations that no one else had. Increasingly, though, technology plays a significant role in discoveries by sifting through enormous amounts of data and extracting valuable insights.

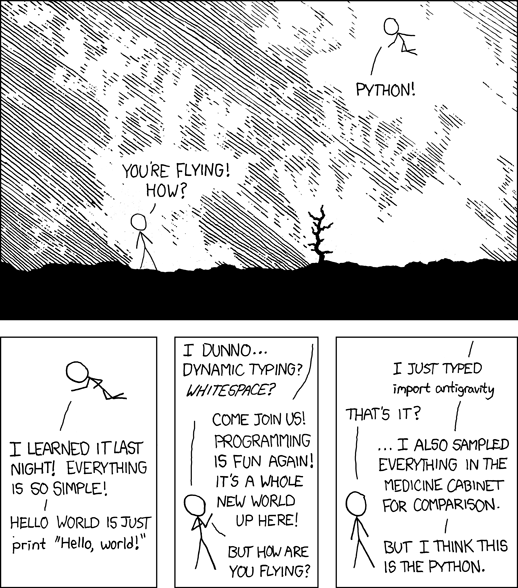

Python’s popularity in scientific computing would not surprise most Python programmers today. But back in 2009, when I attended the first PyCon India at IISc Bangalore, I was surprised to see talks on experimental physics and fluid simulations. When I asked Prof. Prabhu why Python is so popular in scientific computing, he said, “it is very accessible to us – non-programmers”.

Casually browsing through the software used by the astronomers, you will find Python libraries like NumPy, SciPy, Matplotlib, pandas and Jupyter. Remarkably, entire projects such as eht-python are written entirely in Python. Python is not just the language of choice; it is the lingua franca of scientific computing.

Yet, if you think about it, there are better programming languages in terms of speed, type safety or brevity. But Python overcomes these limitations, thanks to a few pragmatic language design decisions.

Speed Can Be Delegated

Ironically, plain Python code can perform very poorly on computation-intensive tasks. But libraries like NumPy are the de facto standard for any form of number crunching. NumPy provides an N-dimensional array object with many high-level operations, such as cross product or transpose. Because these operations are implemented in compiled C code, they run close to raw machine speed.
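To make the idea of delegation concrete, here is a small sketch comparing a dot product written as a plain Python loop with the same computation handed to NumPy, along with the cross product and transpose operations mentioned above. The function names `dot_pure` and `dot_numpy` are illustrative only, and timings vary from machine to machine, so no numbers are quoted here.

```python
import timeit

import numpy as np


def dot_pure(xs, ys):
    """Dot product as an explicit Python loop: every step runs in the interpreter."""
    total = 0.0
    for x, y in zip(xs, ys):
        total += x * y
    return total


def dot_numpy(xs, ys):
    """The same dot product, delegated to NumPy's compiled implementation."""
    return float(np.dot(xs, ys))


# High-level array operations from the text: cross product and transpose.
a = np.array([0.0, 1.0, 2.0])
b = np.array([1.0, 0.0, 1.0])
cross = np.cross(a, b)          # a 3-vector perpendicular to both a and b
m = np.arange(6).reshape(2, 3)
m_t = m.T                       # transpose: shape (2, 3) becomes (3, 2)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    xs, ys = rng.random(100_000), rng.random(100_000)
    xs_list, ys_list = xs.tolist(), ys.tolist()
    loop_s = timeit.timeit(lambda: dot_pure(xs_list, ys_list), number=10)
    numpy_s = timeit.timeit(lambda: dot_numpy(xs, ys), number=10)
    print(f"pure Python: {loop_s:.4f} s, NumPy: {numpy_s:.4f} s")
```

Both functions compute the same value; the difference is only where the inner loop runs.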

In the early days of Python, it was expected that performance-intensive parts would be written in languages like C or FORTRAN and invoked through a wrapper interface. Over time, a wealth of libraries like NumPy made it largely unnecessary to write custom C code. Why reinvent the wheel when you can just “import” and use it?
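For a flavour of the older wrapper approach, here is a minimal sketch that uses the standard library’s `ctypes` module to call `cos` from the C math library. It assumes a POSIX system (Linux or macOS) where `libm` can be located; the wrapper name `c_cos` is hypothetical. Tools such as SWIG, f2py and Cython automate much of this, but the core idea is the same: declare the C signature, then call the function as if it were Python.

```python
import ctypes
import ctypes.util
import math

# Assumption: a POSIX system. find_library("m") locates the C math library;
# if that fails, fall back to the symbols already loaded into this process.
_libm_path = ctypes.util.find_library("m")
libm = ctypes.CDLL(_libm_path) if _libm_path else ctypes.CDLL(None)

# Declare the C signature: double cos(double).
libm.cos.argtypes = [ctypes.c_double]
libm.cos.restype = ctypes.c_double


def c_cos(x: float) -> float:
    """Hypothetical thin wrapper: pass a C double to libm's cos and return a float."""
    return libm.cos(float(x))


if __name__ == "__main__":
    for x in (0.0, math.pi / 3, math.pi):
        print(x, c_cos(x), math.cos(x))
```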

Libraries that Play Well

Working with third-party C libraries is not for the faint-hearted. I learnt this the hard way in 2002, when I was adapting an algorithm from a paper for my project on wavelet-based image compression. We needed to use an existing Fast Fourier Transform library written in C.

The library worked when you used it as is. But if you tried to extend a data structure, you might end up with a segmentation fault from a null pointer dereference. Managing memory manually, which meant working out every code path, turned out to be very stressful. The library was well documented, but we practically needed to understand every line before tweaking it.

Eventually, we gave up and started implementing most of the project in Python. It was much easier to work with higher-level data structures like dictionaries and lists, without the dance of malloc and free. Even better, the Python code was almost a direct translation of the mathematics in the paper.
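To illustrate what “a direct translation of the mathematics” can look like, here is a hypothetical sketch of one level of the unnormalised Haar wavelet transform, the simplest wavelet, using only lists and a dictionary. It is not the algorithm from the paper mentioned above; the function names and the thresholding step are assumptions for illustration. Each line follows the formulas a_k = (x_{2k} + x_{2k+1}) / 2 and d_k = (x_{2k} - x_{2k+1}) / 2 almost word for word.

```python
def haar_step(signal):
    """One level of the unnormalised Haar transform.

    a_k = (x[2k] + x[2k+1]) / 2    (averages: a coarser copy of the signal)
    d_k = (x[2k] - x[2k+1]) / 2    (details: what the averages lose)
    """
    if len(signal) % 2:
        raise ValueError("signal length must be even")
    pairs = list(zip(signal[0::2], signal[1::2]))
    averages = [(x + y) / 2 for x, y in pairs]
    details = [(x - y) / 2 for x, y in pairs]
    return averages, details


def inverse_haar_step(averages, details):
    """Invert haar_step: x[2k] = a_k + d_k and x[2k+1] = a_k - d_k."""
    signal = []
    for a, d in zip(averages, details):
        signal.extend([a + d, a - d])
    return signal


def compress(signal, threshold):
    """Toy compression: keep only detail coefficients with |d| >= threshold.

    The surviving details are stored sparsely in a dict {index: value}.
    """
    averages, details = haar_step(signal)
    kept = {i: d for i, d in enumerate(details) if abs(d) >= threshold}
    return averages, kept, len(details)


def decompress(averages, kept, n_details):
    """Rebuild the signal, treating discarded details as zero."""
    details = [kept.get(i, 0.0) for i in range(n_details)]
    return inverse_haar_step(averages, details)


if __name__ == "__main__":
    signal = [9, 7, 3, 5, 6, 10, 2, 6]
    averages, kept, n = compress(signal, threshold=1.5)
    print(averages, kept)                  # [8.0, 4.0, 8.0, 4.0] {2: -2.0, 3: -2.0}
    print(decompress(averages, kept, n))   # [8.0, 8.0, 4.0, 4.0, 6.0, 10.0, 2.0, 6.0]
```

There is no allocation or freeing to track: lists grow as needed, and the dictionary naturally holds only the coefficients worth keeping.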

Python libraries tend to compose quite well, while C libraries often don’t. This is partly due to Python’s dynamic typing and automatic memory management, but I personally feel it is mainly due to good conventions. Most Python idioms are well documented, which keeps surprises to a minimum. For instance, a deeply nested class hierarchy is frowned upon because, as The Zen of Python puts it, “flat is better than nested”.

Interactive Exploration

Research is exploratory. We do not know what we may find. Even when we have a good guess, we cannot wait ages to find out, because we might be chasing a dead end. An interactive interface is a key tool for a researcher or scientist. A Jupyter notebook comes close to the ideal with its live code and embedded visualizations.

If you need to try a computation with a different set of parameters, you can run it and view the results, or even plot them to visualize them better. Then you can feed those results into another computation. This recorded transcript becomes a valuable data pipeline that a different user can replay, either for verification or with a different set of observations.
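As a rough sketch of that workflow, the snippet below mimics a few notebook cells. It sweeps a hypothetical exponential-decay model N(t) = N0 * exp(-t / tau) over several values of tau, then feeds each run into a second computation that estimates the half-life from a least-squares fit of log N against t. The model, the parameter values and the function names are all assumptions for illustration; in a real notebook each step would typically be its own cell, perhaps with a plot in between.

```python
import math


def decay(n0, tau, times):
    """Hypothetical model: N(t) = n0 * exp(-t / tau), evaluated at each time."""
    return [n0 * math.exp(-t / tau) for t in times]


def estimate_half_life(times, counts):
    """Fit log(N) = c + slope * t by least squares; half-life = ln 2 / -slope."""
    logs = [math.log(c) for c in counts]
    n = len(times)
    mean_t = sum(times) / n
    mean_log = sum(logs) / n
    cov = sum((t - mean_t) * (y - mean_log) for t, y in zip(times, logs))
    var = sum((t - mean_t) ** 2 for t in times)
    slope = cov / var
    return math.log(2) / -slope


# "Cell 1": run the same computation with different parameters.
times = [0.5 * k for k in range(20)]
runs = {tau: decay(1000.0, tau, times) for tau in (1.0, 2.0, 5.0)}

# "Cell 2": feed each run into another computation.
half_lives = {tau: estimate_half_life(times, counts) for tau, counts in runs.items()}

if __name__ == "__main__":
    for tau, t_half in half_lives.items():
        print(f"tau={tau}: estimated half-life {t_half:.4f}, expected {tau * math.log(2):.4f}")
```

Because the intermediate results live in ordinary dictionaries, another user can rerun the first cell with real observations in place of the synthetic model and reuse the second cell unchanged.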

If you think about it, a conversational interface could be even more approachable for a non-programmer. Alan Kay was very impressed by JOSS, an early interactive programming environment developed at RAND that appealed to economists. I find it endearing that it replied to any command it did not understand with “Eh?” or “SORRY”.

Imagine using today’s voice recognition technology to build such a conversational virtual assistant for scientists. Since much of science (especially physics) involves mathematics, spelling out complex equations would quickly get tedious. Listening to tables of numbers is no fun either. So unless the conversation steps up to an amazing level of artificial intelligence (imagine a reply like “I have run simulations on every known element, and none can serve as a viable replacement for the palladium core.”), we are probably stuck with current interfaces.

Future of Python

The Event Horizon Telescope (EHT) project’s imaging of M87 involved processing petabytes of data (which is publicly available). The team plans to add new telescopes in the future, increasing the volume of data by orders of magnitude. More generally, the computational demands of science will keep growing and even enter new domains. The question is: will Python keep up, or will it be replaced?

Python has a strong ecosystem with hundreds of scientific libraries, and it will be hard for another language to reproduce that. It is also a very easy language to pick up. Its readability is so good that Python code is often compared to pseudocode. I believe it has changed our expectations of what code should look like. Any new language would need equal or better readability to inspire a switch.

While there are several other promising languages like Julia or Rust, I am confident that Python will remain the scientist’s favourite programming language for a long while. Despite its limitations, Python has found a sweet spot between ease and power.

Technological progress keeps accelerating every year. This can translate into progress for humanity if we make technology more accessible. We need physicists, mathematicians, biologists, economists, farmers and many others to use cheap computing power to build better things.

Python plays a significant role here by making coding less intimidating and more collaborative. That’s why I believe it will keep bringing more people to computing and will be part of many future breakthroughs.