I used to think of Procrastination as a villain. If I had to complete a task before a deadline the following week, I would not start work until the deadline got uncomfortably close. The work would end up being more rushed than if I had used the entire week. Procrastination often robs me of the opportunity to produce better-quality work.

I do not sense Procrastination as an urge or a feeling. Rather, it is the absence of the feeling that I need to start working on something. Frankly, it is also the knowledge that I could start, but probably not now. To procrastinate is both a passive activity, where I am not thinking about a task due in the future, and an active choice to work on seemingly more urgent tasks due in the near future. Hence, a vicious circle.

As I get older, I realize that time is no longer a plentiful and readily available resource. Work and family commitments leave only islands of time in my daily calendar. Pulling an all-nighter is not practical as you age, since a sleep deficit cannot be easily overcome anymore. So delaying tasks often means unfinished tasks or lost opportunities. Procrastination has become a more urgent problem for me than ever.

But again, it is not accurate to always characterize Procrastination as a villain. There are times when I found that delaying creative work helped me find better ideas than I would have had if I had started earlier. ‘Sleeping on a problem’ can be surprisingly effective for finding a fresh perspective on complex issues. Many creative people do not progress at a steady rate; instead, they work in inspired sprints and take breaks in between.

So forcing yourself to work when your mind is not ready is not very useful. Is Procrastination our mind’s defense mechanism against attempting to solve poorly understood problems? Maybe. This is why there is no one-size-fits-all solution to everyone’s Procrastination problems.

But I will share some of my techniques below that have helped me solve this problem to a large degree.

Digital Minimalism

Most of us spend an incredible amount of time on our smartphones. Many apps are designed to hook you into spending as much of your time as possible so that they can monetize it effectively. Watch out for such apps. In my experience, these apps are of the following kinds:

- Social networking apps: e.g. Facebook, Instagram, WhatsApp, Twitter. I have uninstalled most of them except WhatsApp and Twitter.

- Entertainment apps: e.g. YouTube, Netflix, Amazon Prime, Games. I watch only highly recommended or very promising content. I set aside weekends and holidays for movies or longer shows.

- News apps: e.g. Hacker News, Reddit, Newspapers. These are essential to keep myself updated, but it is very easy to get lost in rabbit holes and doomscrolling.

Your list of apps might be different. Most phones now come with digital wellbeing apps that tell you how many times and for how long you use each app. Start from that and do a self-evaluation of whether you want to spend so much time on each app. Turn off notifications from all apps except the ones you really, really need.

Today your time is a precious resource that must be protected from, or mindfully shared with, such apps. So it is not enough to practice self-control. You might need to modify your environment by uninstalling the least essential apps or time-limiting your access to them.

I saved a lot of time every day by practicing digital minimalism. My Procrastination has also reduced because such easy time sinks are less available.

Recommended Reading:

- Digital Minimalism: Choosing a Focused Life in a Noisy World - Helps you understand why you need to minimize digital stuff

- Atomic Habits: An Easy & Proven Way to Build Good Habits & Break Bad Ones - Systematic changes rather than big bang approaches to bring change

Paper TODO lists

There are a million TODO apps. They are all horrible, because any digital device has a notification or a tempting app waiting for you, even if you have followed my advice of turning off most notifications.

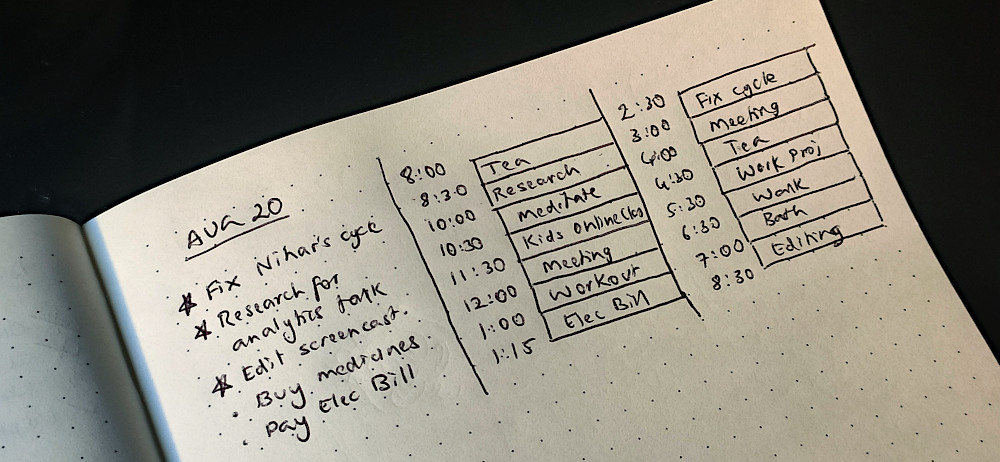

Paper is the best alternative. After trying all sorts of approaches, I have settled on writing my daily TODO list in an A5-size notebook. The hardest part is building a daily habit of opening the notebook and grooming your TODO list. But it is worth it.

Paper has the following advantages:

- Avoids distractions: Remember picking up your phone to note something down, getting sidetracked into several apps and eventually forgetting why you picked up the phone in the first place?

- Writing down helps you remember: For me, writing with a pen is slower than typing. But that deliberate process of writing helps me remember better than typing does. Keeping TODO list items in your memory is important because most of the planning and replanning of everyday tasks happens in your head.

- Spatial memory: Sometimes I remember that I wrote something 3 months back in my notebook and can recall specifics, like it was on the left side, written in red ink or had a funny Peanuts doodle next to it. Sure, it is not easily searchable by keywords. But the way my memory works, it is still retrievable and a lot more likely to be retained in my mind.

- Fun and Creative: Try adding a funny doodle next to an item in a TODO app. Or try drawing the headline of an upcoming fun event in balloon letters. Paper puts no limits on your creativity. Check out galleries of Bullet Journalists. Some are probably procrastinating, but a little bit of creativity is so inspiring.

Recommended Reading:

- Bullet Journal - Basics - I use a slightly modified bullet journaling method to manage my TODOs

Timeboxing

Imagine you somehow manage to start working on a project several days in advance. One hour later, to your extreme frustration, the page is still blank. This paradox of having abundant time yet making no progress can be understood if you know Parkinson’s law: “work expands so as to fill the time available for its completion”.

I found the best way to tackle this problem is to use timeboxing. Take any project and break it down into clear and actionable tasks that have a limited time to complete. Some tasks might seem clear but could be complex and involve multiple steps. For example, “Write an Essay” can be split into “Research”, “Outline”, “Draft” and “Review” tasks.

Timeboxing is often cited as one of the most effective ways to beat Procrastination. Just the act of dedicating time to a task brings clarity and focus. I find the limited-time aspect of timeboxing to be simultaneously relieving and motivating. Since there is an internal deadline I have to meet, I avoid the trap of time abundance.

From my experience, here are a number of Do’s and Don’ts to follow while timeboxing:

- Don’t pack your day with work. Find ‘recharge time’ in between tasks to top up your batteries throughout the day: coffee breaks, walks, meditation (more on that below), fooling around or simply sitting idle. I prefer a 30-minute break every 2 hours.

- Don’t aim for a lot in one day. Stick to three significant tasks for the day. Everything else is a bonus.

- Don’t be too rigid about the timings. Be flexible. They are boxes, they can be moved around!

- Don’t be fixed in a spot or fixated on a device. Try a change of scenery every so often. For example, after 2 hours of working on the laptop, avoid spending your break watching a video on the same screen.

- Do revisit your old timeboxes. Improve your estimates by reflecting on older guesstimates and comparing them with the actual time taken.

- Do recognize your work. At the end of the day:

  - Tick off every task you finished to reward yourself for completing them.

  - Write down things you did but did not plan for. Then tick them off.
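If you would rather not do the estimate review by hand, the arithmetic behind it is small. The following is only a rough sketch, assuming you copy the estimated and actual minutes for each timebox out of your notebook. The `Timebox` class, the function names and all the numbers are hypothetical, made up for the “Write an Essay” example above.

```python
from dataclasses import dataclass


@dataclass
class Timebox:
    task: str
    estimated_min: int  # what I guessed before starting
    actual_min: int     # what it really took


def overrun_factor(boxes):
    """Ratio of total actual time to total estimated time."""
    estimated = sum(b.estimated_min for b in boxes)
    actual = sum(b.actual_min for b in boxes)
    if estimated == 0:
        return 1.0  # no history yet, so leave new guesses unchanged
    return actual / estimated


def adjusted_estimate(guess_min, boxes):
    """Scale a fresh guess by how far off past guesses were."""
    return round(guess_min * overrun_factor(boxes))


# Hypothetical history for the "Write an Essay" example.
history = [
    Timebox("Research", 60, 90),
    Timebox("Outline", 30, 30),
    Timebox("Draft", 90, 120),
    Timebox("Review", 30, 30),
]

print(overrun_factor(history))
print(adjusted_estimate(60, history))
```

With these made-up numbers, the past timeboxes took 270 minutes against an estimated 210, so a fresh 60-minute guess is stretched to 77 minutes. A single overall ratio is the simplest choice. Keeping a separate ratio per kind of task might work better if your research always overruns but your reviews never do.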

Recommended Reading:

- Rest: Why You Get More Done When You Work Less - Required reading on why you need to plan your day with fewer productive activities than you think

Mindfulness

I have been practicing mindful meditation for the past 3 years. It has been extremely effective in reducing my habit of procrastinating. It is a practical tool with surprising real-world benefits.

Like many people, I had a lot of misconceptions about meditation. There are many kinds of meditation. The Vipassana approach to meditation that I follow is:

- Non-religious - you are not chanting any mantras

- Not about concentrating hard - although it may improve your focus, the goals are different.

- Not time-consuming - you can do just 10 minutes per day, although I find 20 mins more effective.

- Not done in a perfectly silent environment - it is possible to meditate in any environment. If noise affects you in the beginning, get noise-cancelling headphones or earplugs.

It might not be clear how meditation is effective in managing your work. I found it helps me respond rather than react to situations. It is like a superpower to slow down time (Quicksilver?). Then I can pause and objectively evaluate the situation.

For example, I will begin to notice a feeling of anxiety when I think of cleaning my desk. It is because my bookshelf is not organized enough to hold all the books lying around. Organizing my small bookshelf means donating some books, and that means connecting with my bibliophile friend whom I haven’t spoken to in a while. Instead, I decide to take a break (and relieve my anxiety) by watching a comedy special on Netflix. Sounds like a lot to unpack, doesn’t it?

This brings us to the question that we never answered: why do we procrastinate? Contrary to what experts used to think, it is not a self-discipline problem. It is mostly an emotional problem. There are different kinds of procrastination, such as the passive and active types. So it is important to introspect and understand what is blocking you. Mindfulness helps you immensely in identifying the root cause.

Recommended Reading:

- Mindfulness in Plain English - Best introduction to mindful meditation

- The Psychology of Procrastination: Understand Your Habits, Find Motivation, and Get Things Done - Great book to understand the modern scientific view of the causes of procrastination

Summary

Procrastination should be seen not as the problem but as a symptom. It needs to be addressed or it will start preventing you from reaching your goals. I have shared a few techniques that have worked for me over the last few years. I am reasonably productive when I follow them diligently. I hope you will be too.

Some affiliate links are present in this article. They would not affect you but would help sustain the blog.